Convolutions in 1D

As mentioned in the introductory section for convolutions, convolutions allow mathematicians to "blend" two seemingly unrelated functions; however, this definition is not very rigorous, so it might be better to think of a convolution as a method to apply a filter to a signal or image. This, of course, brings up more questions: what is a filter? What is a signal? How is this all related to images?

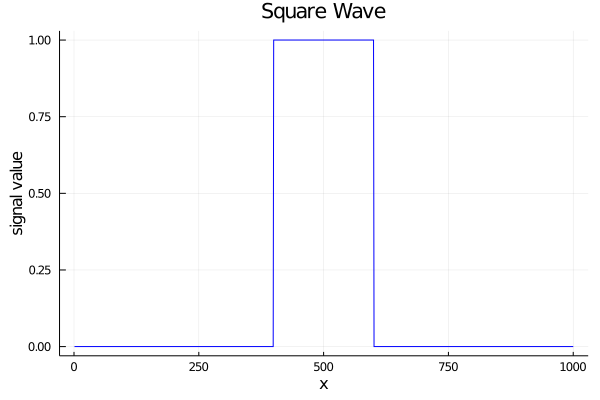

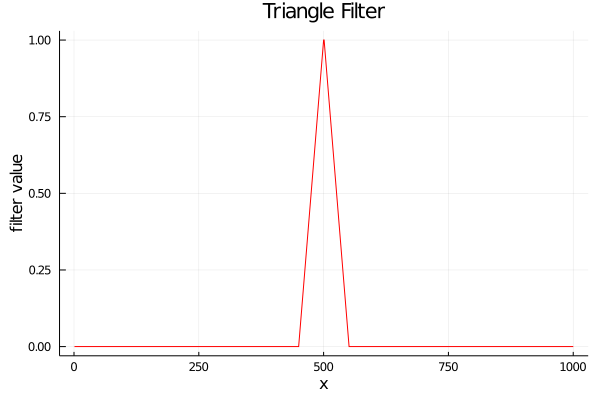

For this, we will start with some predefined signal. It does not matter too much what it is, so we will pick a square wave where everything is set to zero except for a few elements at the center, which will be set to one. This signal can be treated as an array, or a black and white, one-dimensional image where everything is black except for a white strip at the center. We will also introduce a filter, which will be a simple triangle wave that goes to 1. Both of these are shown below:

So now we have a signal and a filter.

How do we apply the filter to the signal?

The easiest way to do this is to iterate through every point in the signal and blend it with neighboring elements, where each neighboring element is weighted based on the filter value.

So in the case where the triangle wave is only 3 elements ([0.5, 1, 0.5]), the output at each point would be

where is the output value, is the input array (a signal or image), and is an iterable element through that signal. In this way, the "application of a filter," is simply a multiplication of the triangle wave centered around each point of the input array, followed by in integral or sum of the output. In some sense, this means we will shift the filter, then multiply and sum every step. This can be seen in the following animation:

Here, the purple, dashed line is the output convolution , the vertical line is the iteration , the blue line is the original signal, the red line is the filter, and the green area is the signal multiplied by the filter at that location. The convolution at each point is the integral (sum) of the green area for each point.

If we extend this concept into the entirety of discrete space, it might look like this:

Where f[n] and g[n] are arrays of some form.

This means that the convolution can calculated by shifting either the filter along the signal or the signal along the filter.

This can be read as we said before: every step, we shift the filter, multiply, and sum.

There is, of course, a small caveat here.

Why are we subtracting ?

Certainly, if we wanted to "shift the filter along the signal," we could also do so by adding instead, but that is actually an entirely separate operation known as a correlation, which will be discussed at a later time.

The simplest interpretation for this equation is the same as the animation: we reverse the second array, and move it through the first array one step at a time, performing a simple element-wise multiplication and summation at each step. With this in mind, we can almost directly transcribe the discrete equation into code like so:

function convolve_linear(signal::Array{T, 1}, filter::Array{T, 1},

output_size) where {T <: Number}

# convolutional output

out = Array{Float64,1}(undef, output_size)

sum = 0

for i = 1:output_size

for j = max(1, i-length(filter)):i

if j <= length(signal) && i-j+1 <= length(filter)

sum += signal[j] * filter[i-j+1]

end

end

out[i] = sum

sum = 0

end

return out

end

static double[] ConvolveLinear(double[] signal, double[] filter, int outputSize)

{

// Convolutional output.

var output = new double[outputSize];

var sum = 0.0;

for (var i = 0; i < outputSize; i++)

{

for (var j = Math.Max(0, i - filter.Length); j <= i; j++)

{

if (j < signal.Length && (i - j) < filter.Length)

{

sum += signal[j] * filter[i - j];

}

}

output[i] = sum;

sum = 0.0;

}

return output;

}

def convolve_linear(signal, filter_array, output_size):

out = np.zeros(output_size)

s = 0

for i in range(output_size):

for j in range(max(0, i - len(filter_array)), i + 1):

if j < len(signal) and (i - j) < len(filter_array):

s += signal[j] * filter_array[i - j]

out[i] = s

s = 0

return out

The easiest way to reason about this code is to read it as you might read a textbook.

For each element in the output domain, we are summing a certain subsets of elements from i-length(filter) to i after multiplying it by the reversed filter (filter[i-j]).

In this way, it is precisely the same as the mathematical notation mentioned before.

In contrast to the animation, where the filter continuously reappears on the left edge of the screen, the code we have written for this part of the chapter requires the user to specify what they expect the output array length to be. Determining what should happen at the edges of the convolution is a somewhat hotly debated topic and differs depending on what the user actually wants, so we will be discussing this in greater detail later in this chapter.

As an important note, if we were to extend the convolution into continuous space, we might write something like:

Note that in this case, and are not necessarily spatial elements, but the interpretation is otherwise the same as before.

At this stage, the mathematics and code might still be a little opaque, so it is a good idea to play around a bit and think about how this operation might be used in practice with a few different filters.

Playing with filters

Honestly, the best way to learn how convolutions work is by using them for a number of different signals and filters, so let us extend the previous triangle filter a bit further by convolving a square wave with a relatively sharp Gaussian, which can be seen in the following animation:

In practice, the convolutional output here is very similar to the triangle wave we showed before. The final convolved image looks a lot like the square, except that its boundaries have been smoothed out or "blurred." In practice whenever a Gaussian filter is used, it will always blur the other convolved signal, which is why a convolution with a Gaussian is also called a blurring operation. This operation is used very often when dealing with two-dimensional images, and we will discuss common kernels found in the wild in the next section. Still, it is interesting to see the blurring operation in action by convolving a random distribution with a larger Gaussian filter:

In this animation, the final convolution is so blurred that it does not seem related to the random input signal at all! In fact, this animation seems to blend much more when compared to the previous Gaussian and the triangle wave animations. This is because the Gaussian is wider than the previous to filters. In general, the wider the filter, the stronger the blurring effect.

So what happens if we convolve a Gaussian with another Gaussian? Well, that is shown below:

As one might expect, the output is a blurrier Gaussian, which is essentially just wider. If you were paying particularly close attention to the visualization, you might have noticed that the green area inside this visualization does not properly line up with the overlap of the two arrays. Don't worry! This is exactly what should happen! Remember that the convolution requires a multiplication of the signal and filter, which was the same as the overlap when the signal was a square wave; however, in the case of two distinct signals, we should expect the multiplied output to look somewhat distinct.

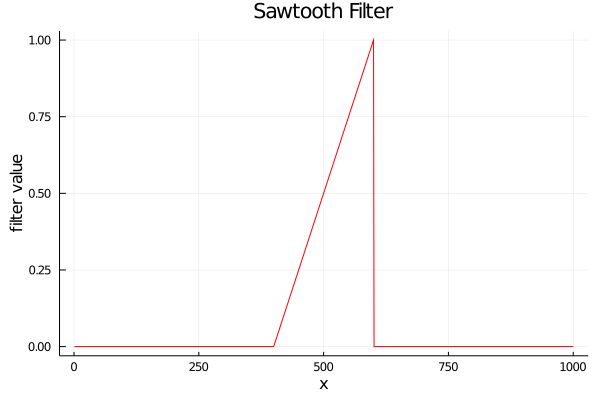

Let us extend this concept to one final example of a square wave convolved with a triangular, sawtooth function that looks like this:

This is the first non-symmetric filter of this chapter, and its convolution would look like this:

Non-symmetric filters are useful for testing convolutions to ensure that the output is correct, so it might be worthwhile to linger on this animation for a bit longer. Notice how the convolution has an accelerating, positive slope when the reversed sawtooth function interacts with the square. This makes sense as the smallest part of the triangle interacts first. Similarly, there is a negatively accelerating slope when the sawtooth function leaves the square.

Dealing with boundaries

In all of the animations, we have shown the filter constantly reappearing on the left edge of the screen, which is not always the best thing to do at the boundaries. In fact, these boundary conditions are somewhat non-trivial to code, so for this section, we will start with relatively simple boundary conditions that were introduced in the previous code example.

Simple boundaries

In general, if a user wants to see a full convolution between two signals, the output size must be the size of the two signals put together, otherwise, we cannot iterate through the entire convolutional output domain. For example, here is random noise again convolved with a Gaussian function, but with non-periodic boundaries:

This shows the full, unbounded convolution of the two signals, where we clearly see a "ramp up" and "ramp down" phase at the start and end of the animation. That said, there are many applications where the user actually needs to specify the output domain to be another length, such as the size of one of the input signals.

In this case, the simplest boundary would be to assume that whenever the filter hits the end of the image, it simply disappears. Another way to think about this is that the signal only exists for the domain we specify it over, and is all 0s outside of this domain; therefore, the filter does not sum any signal from elements beyond its scope. As an example, let's take the same example as before:

Similar to the case without boundary conditions, this convolution needs to "ramp up," but it does not need to "ramp down." This is because the convolution output no longer extends past the bounds of the original signal so the bounded convolution is a subset of the full convolution. More than that, the convolution does not go all the way to 0 on the right side. This means that we are actually ignoring a rather important part of the convolution!

This is 100% true; however, if the signal is large and the filter is small (as is the case with most of image processing), we do not really care that much about the bits of the convolution we missed. In addition, there is a way to center the convolution by modifying the location where the filter starts. For example, we could have half of the filter already existing and overlapping with the signal for the very first computed point of the convolution. For this reason, simple bounds are used frequently when performing convolutions on an image.

In the previous code snippet, we were able to perform both a bounded and unbounded convolution. Here it is again for clarity:

function convolve_linear(signal::Array{T, 1}, filter::Array{T, 1},

output_size) where {T <: Number}

# convolutional output

out = Array{Float64,1}(undef, output_size)

sum = 0

for i = 1:output_size

for j = max(1, i-length(filter)):i

if j <= length(signal) && i-j+1 <= length(filter)

sum += signal[j] * filter[i-j+1]

end

end

out[i] = sum

sum = 0

end

return out

end

static double[] ConvolveLinear(double[] signal, double[] filter, int outputSize)

{

// Convolutional output.

var output = new double[outputSize];

var sum = 0.0;

for (var i = 0; i < outputSize; i++)

{

for (var j = Math.Max(0, i - filter.Length); j <= i; j++)

{

if (j < signal.Length && (i - j) < filter.Length)

{

sum += signal[j] * filter[i - j];

}

}

output[i] = sum;

sum = 0.0;

}

return output;

}

def convolve_linear(signal, filter_array, output_size):

out = np.zeros(output_size)

s = 0

for i in range(output_size):

for j in range(max(0, i - len(filter_array)), i + 1):

if j < len(signal) and (i - j) < len(filter_array):

s += signal[j] * filter_array[i - j]

out[i] = s

s = 0

return out

Here, the main difference between the bounded and unbounded versions is that the output array size is smaller in the bounded case. For an unbounded convolution, the function would be called with a the output array size specified to be the size of both signals put together:

# full convolution, output will be the size of x + y - 1

full_linear_output = convolve_linear(x, y, length(x) + length(y) - 1)

// Full convolution, output will be the size of x + y - 1.

var fullLinearOutput = ConvolveLinear(x, y, x.Length + y.Length - 1);

# full convolution, output will be the size of x + y - 1

full_linear_output = convolve_linear(x, y, len(x) + len(y) - 1)

On the other hand, the bounded call would set the output array size to simply be the length of the signal

# simple boundaries

simple_linear_output = convolve_linear(x, y, length(x))

// Simple boundaries.

var simpleLinearOutput = ConvolveLinear(x, y, x.Length);

# simple boundaries

simple_linear_output = convolve_linear(x, y, len(x))

Finally, as we mentioned before, it is possible to center bounded convolutions by changing the location where we calculate the each point along the filter. This can be done by modifying the following line:

for j = max(1, i-length(filter)):i

for (var j = Math.Max(0, i - filter.Length); j <= i; j++)

for j in range(max(0, i - len(filter_array)), i + 1):

Here, j counts from i-length(filter) to i.

To center the convolution, it would need to count from i-(length(filter)/2) to i+(length(filter)/2) instead.

I think this is a good place to stop discussions on simple boundary conditions. Now let us talk a bit more in detail about the case where we want the filter to continuously reappear every loop. This case is known as the "periodic boundary condition" and was used for the visualizations at the start of this chapter.

Periodic boundary conditions

Though periodic boundary conditions are more complicated that those mentioned in the previous section, they are still relatively straightforward to implement. With these conditions, the filter will wrap itself around to the other end of the signal whenever it hits a boundary. In this way, the signal is periodic, with an identical copy of itself acting as left and right neighbors. Those neighbors then have other neighbors, and those then have more neighbors, creating a sea of signals extending to infinity and beyond in both directions. For us, this means that when the filter leaves one edge of the domain, it simply appears on the other, opposite edge.

This particular convolution is known as a cyclic convolution and is also the most common output of convolutions that work via the convolutional theorem, which will be discussed in another section. For clarity: here is the same cyclic visualization we showed above with a random distribution and a Gaussian signal.

In code, this typically amounts to using some form of modulus operation, as shown here:

function convolve_cyclic(signal::Array{T, 1},

filter::Array{T, 1}) where {T <: Number}

# output size will be the size of sign

output_size = max(length(signal), length(filter))

# convolutional output

out = Array{Float64,1}(undef,output_size)

sum = 0

for i = 1:output_size

for j = 1:output_size

sum += get(signal, mod1(j, output_size), 0) * get(filter, mod1(i-j, output_size), 0)

end

out[i] = sum

sum = 0

end

return out

end

static double[] ConvolveCyclic(double[] signal, double[] filter)

{

var outputSize = Math.Max(signal.Length, filter.Length);

// Convolutional output.

var output = new double[outputSize];

var sum = 0.0;

for (var i = 0; i < outputSize; i++)

{

for (var j = 0; j < outputSize; j++)

{

if (Mod(i - j, outputSize) < filter.Length)

{

sum += signal[Mod(j - 1, outputSize)] * filter[Mod(i - j, outputSize)];

}

}

output[i] = sum;

sum = 0.0;

}

return output;

}

def convolve_cyclic(signal, filter_array):

output_size = max(len(signal), len(filter_array))

out = np.zeros(output_size)

s = 0

for i in range(output_size):

for j in range(output_size):

if(mod1(i - j, output_size) < len(filter_array)):

s += signal[mod1(j - 1, output_size)] * filter_array[mod1(i - j, output_size)]

out[i] = s

s = 0

return out

This is essentially the same as before, except for the modulus operations, which allow us to work on a periodic domain.

As a final note before continuing: dealing with boundaries is tricky business and can dramatically change the behavior of the output convolution. For this reason, it is important to think about what types of boundaries will work best for what you, the programmer, actually need. The selection of boundary conditions will be a common trope for a large portion of computer graphics and physics algorithms where researchers often need to present and simulate data on an array of some sort.

Example Code

For the code associated with this chapter, we have used the convolution to generate a few files for the full convolution, along with the periodic and simple boundary conditions discussed in this chapter.

using DelimitedFiles

using LinearAlgebra

function convolve_cyclic(signal::Array{T, 1},

filter::Array{T, 1}) where {T <: Number}

# output size will be the size of sign

output_size = max(length(signal), length(filter))

# convolutional output

out = Array{Float64,1}(undef,output_size)

sum = 0

for i = 1:output_size

for j = 1:output_size

sum += get(signal, mod1(j, output_size), 0) * get(filter, mod1(i-j, output_size), 0)

end

out[i] = sum

sum = 0

end

return out

end

function convolve_linear(signal::Array{T, 1}, filter::Array{T, 1},

output_size) where {T <: Number}

# convolutional output

out = Array{Float64,1}(undef, output_size)

sum = 0

for i = 1:output_size

for j = max(1, i-length(filter)):i

if j <= length(signal) && i-j+1 <= length(filter)

sum += signal[j] * filter[i-j+1]

end

end

out[i] = sum

sum = 0

end

return out

end

function main()

# sawtooth functions for x and y

x = [float(i)/200 for i = 1:200]

y = [float(i)/200 for i = 1:200]

# Normalization is not strictly necessary, but good practice

normalize!(x)

normalize!(y)

# full convolution, output will be the size of x + y - 1

full_linear_output = convolve_linear(x, y, length(x) + length(y) - 1)

# simple boundaries

simple_linear_output = convolve_linear(x, y, length(x))

# cyclic convolution

cyclic_output = convolve_cyclic(x, y)

# outputting convolutions to different files for plotting in external code

writedlm("full_linear.dat", full_linear_output)

writedlm("simple_linear.dat", simple_linear_output)

writedlm("cyclic.dat", cyclic_output)

end

using System;

using System.IO;

namespace Convolution1D

{

public class Convolution1D

{

// Creates a sawtooth function with the given length.

static double[] CreateSawtooth(int length)

{

var array = new double[length];

for (var i = 0; i < length; i++)

array[i] = (i + 1) / 200f;

return array;

}

// Normalizes the given array.

static void Normalize(double[] array)

{

var norm = Norm(array);

for (var i = 0; i < array.Length; i++)

array[i] /= norm;

}

// Calculates the norm of the array.

static double Norm(double[] array)

{

var sum = 0.0;

for (var i = 0; i < array.Length; i++)

sum += Math.Pow(array[i], 2);

return Math.Sqrt(sum);

}

// Modulus function which handles negative values properly.

// Assumes that y >= 0.

static int Mod(int x, int y) => ((x % y) + y) % y;

static double[] ConvolveCyclic(double[] signal, double[] filter)

{

var outputSize = Math.Max(signal.Length, filter.Length);

// Convolutional output.

var output = new double[outputSize];

var sum = 0.0;

for (var i = 0; i < outputSize; i++)

{

for (var j = 0; j < outputSize; j++)

{

if (Mod(i - j, outputSize) < filter.Length)

{

sum += signal[Mod(j - 1, outputSize)] * filter[Mod(i - j, outputSize)];

}

}

output[i] = sum;

sum = 0.0;

}

return output;

}

static double[] ConvolveLinear(double[] signal, double[] filter, int outputSize)

{

// Convolutional output.

var output = new double[outputSize];

var sum = 0.0;

for (var i = 0; i < outputSize; i++)

{

for (var j = Math.Max(0, i - filter.Length); j <= i; j++)

{

if (j < signal.Length && (i - j) < filter.Length)

{

sum += signal[j] * filter[i - j];

}

}

output[i] = sum;

sum = 0.0;

}

return output;

}

static void Main()

{

// Create sawtooth functions for x and y.

var x = CreateSawtooth(200);

var y = CreateSawtooth(200);

// Normalization is not strictly necessary, but good practice.

Normalize(x);

Normalize(y);

// Full convolution, output will be the size of x + y - 1.

var fullLinearOutput = ConvolveLinear(x, y, x.Length + y.Length - 1);

// Simple boundaries.

var simpleLinearOutput = ConvolveLinear(x, y, x.Length);

// Cyclic convolution.

var cyclicOutput = ConvolveCyclic(x, y);

// Output convolutions to different files for plotting.

File.WriteAllText("full_linear.dat", String.Join(Environment.NewLine, fullLinearOutput));

File.WriteAllText("simple_linear.dat", String.Join(Environment.NewLine, simpleLinearOutput));

File.WriteAllText("cyclic.dat", String.Join(Environment.NewLine, cyclicOutput));

}

}

}

import numpy as np

def mod1(x, y): return ((x % y) + y) % y

def convolve_cyclic(signal, filter_array):

output_size = max(len(signal), len(filter_array))

out = np.zeros(output_size)

s = 0

for i in range(output_size):

for j in range(output_size):

if(mod1(i - j, output_size) < len(filter_array)):

s += signal[mod1(j - 1, output_size)] * filter_array[mod1(i - j, output_size)]

out[i] = s

s = 0

return out

def convolve_linear(signal, filter_array, output_size):

out = np.zeros(output_size)

s = 0

for i in range(output_size):

for j in range(max(0, i - len(filter_array)), i + 1):

if j < len(signal) and (i - j) < len(filter_array):

s += signal[j] * filter_array[i - j]

out[i] = s

s = 0

return out

# sawtooth functions for x and y

x = [float(i + 1)/200 for i in range(200)]

y = [float(i + 1)/200 for i in range(200)]

# Normalization is not strictly necessary, but good practice

x /= np.linalg.norm(x)

y /= np.linalg.norm(y)

# full convolution, output will be the size of x + y - 1

full_linear_output = convolve_linear(x, y, len(x) + len(y) - 1)

# simple boundaries

simple_linear_output = convolve_linear(x, y, len(x))

# cyclic convolution

cyclic_output = convolve_cyclic(x, y)

# outputting convolutions to different files for plotting in external code

np.savetxt('full_linear.dat', full_linear_output)

np.savetxt('simple_linear.dat', simple_linear_output)

np.savetxt('cyclic.dat', cyclic_output)

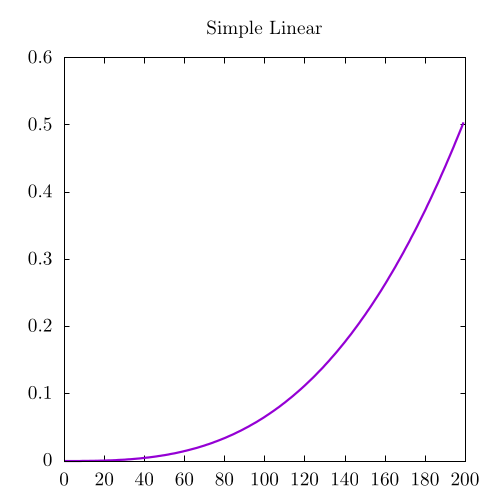

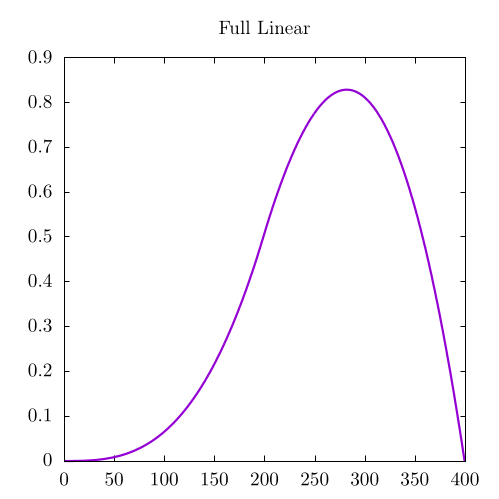

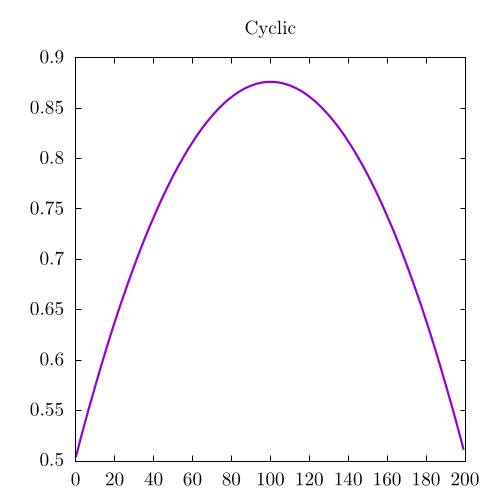

At a test case, we have chosen to use two sawtooth functions, which should produce the following images:

| Description | Image |

|---|---|

| Simple Boundaries |  |

| Full |  |

| Cyclic |  |

As a sanity check, make sure that the bounded convolution is a subset of the full convolution. In this example, the bounded convolution is the start of the full convolution, but it is entirely possible it could be the middle or somewhere else entirely depending on how you counted within the inner, summation loop for the convolution.

License

Code Examples

The code examples are licensed under the MIT license (found in LICENSE.md).

Images/Graphics

- The image "Square Wave" was created by James Schloss and is licensed under the Creative Commons Attribution-ShareAlike 4.0 International License.

- The image "Triangle Wave" was created by James Schloss and is licensed under the Creative Commons Attribution-ShareAlike 4.0 International License.

- The video "Triangle Square Convolution" was created by James Schloss and is licensed under the Creative Commons Attribution-ShareAlike 4.0 International License.

- The video "Gaussian Square Convolution" was created by James Schloss and is licensed under the Creative Commons Attribution-ShareAlike 4.0 International License.

- The video "Gaussian Random Convolution" was created by James Schloss and is licensed under the Creative Commons Attribution-ShareAlike 4.0 International License.

- The video "Double Convolution" was created by James Schloss and is licensed under the Creative Commons Attribution-ShareAlike 4.0 International License.

- The image "Sawtooth Wave" was created by James Schloss and is licensed under the Creative Commons Attribution-ShareAlike 4.0 International License.

- The video "Sawtooth Square Convolution" was created by James Schloss and is licensed under the Creative Commons Attribution-ShareAlike 4.0 International License.

- The video "Full Random Convolution" was created by James Schloss and is licensed under the Creative Commons Attribution-ShareAlike 4.0 International License.

- The video "Simple Random Convolution" was created by James Schloss and is licensed under the Creative Commons Attribution-ShareAlike 4.0 International License.

- The image "Simple Linear" was created by James Schloss and is licensed under the Creative Commons Attribution-ShareAlike 4.0 International License.

- The image "Full Linear" was created by James Schloss and is licensed under the Creative Commons Attribution-ShareAlike 4.0 International License.

- The image "Cyclic" was created by James Schloss and is licensed under the Creative Commons Attribution-ShareAlike 4.0 International License.

Text

The text of this chapter was written by James Schloss and is licensed under the Creative Commons Attribution-ShareAlike 4.0 International License.

Pull Requests

After initial licensing (#560), the following pull requests have modified the text or graphics of this chapter:

- none